Ex Machina (Movie Review)

Hollywood continues to be interested in making films about the perils of AI, and Ex Machina, a 2015 sci-fi film written and directed by Alex Garland,[1] carries on this tradition. Unlike most sci-fi films, though, this one was shot on a small budget ($15 million) and has only three actors with meaningful dialogue. The plot centers on a young programmer, Caleb Smith (played by Domhnall Gleeson[2]), who works for a Google-like tech firm called Blue Book, owned by the reclusive billionaire Nathan Bateman (played by Oscar Isaac). The first scene shows Caleb receiving an email indicating he has won a company-wide contest to spend a week at Bateman’s private estate. In the next shot, we see Caleb arrive by private helicopter at Nathan’s estate, an ultra-modern home nestled within kilometers of pristine alpine forest. The actual building is the Juvet Landscape Hotel in Norway, and it is the perfect set for this film: a high-tech facility surrounded by nature which has purportedly been tamed, a sentiment which can be viewed as either enlightened or arrogant. The small cast allows this chamber piece to focus on dialogue and deep philosophical issues pertaining to problems of consciousness, language, and intelligence.

Warning! Do not start a Promethean fire in the woods

The first interactions between Caleb and Nathan are awkward. The billionaire tries to break the ice by assuring Caleb that this week will just be a guys’ retreat with beer drinking and “bro”-dropping. However, shortly after Caleb has settled into his room, Nathan approaches him with a non-disclosure agreement, telling him he has something incredibly exciting to show him, and chides him for his slowness in complying with trivial legal formalities. Caleb eventually signs the document, and Nathan reveals that he has been building a general artificial intelligence and wants Caleb to conduct the Turing Test to determine whether he believes the machine is conscious. The origin of this test dates back to Turing’s 1950 paper Computing Machinery and Intelligence, in which he remarks that settling whether “machines can think” by the everyday meaning of the words would amount to a “Gallup poll”, and instead argues for a test which can determine whether machines possess human-like language ability. The first section of the paper is titled “The Imitation Game”, which hints at the connections between artificial intelligence and Turing’s own private life, in which he had to “imitate” the behavior of others as a gay man living in mid-twentieth-century England. From a behavioral perspective, our Homo sapiens theory of mind developed to see other agents as having objectives. Whether they are conscious or not is beside the point in an evolutionary sense. What matters is whether they are capable of strategic behavior.

Enter Ava, our coquettish and inquisitive AI played by Alicia Vikander. Ava is exceedingly human in design and movement, and her creator clearly meant for this to be the case. Vikander’s former career as a ballet dancer pays dividends in her graceful movements, creating an emotional tension in our robot’s every step across the floor and in the poignant jerking motions of her head and body. In their first meeting, “Ava: Session 1”, Caleb goes into a room where Ava is kept behind a glass wall. He notices a crack in the glass, indicative of some past trauma, suggesting that whatever has been kept behind the glass has at some point tried to break out. An ominous beginning. But Caleb is willing to put his reservations aside due to the excitement of communicating with what appears to be an exceptional piece of software. In their first conversation, Caleb asks when she learned to speak, which leads to a discussion about the origin of language: “… some people believe language exists from birth, and what is learned is the ability to attach words and structure to the latent ability”. This idea of universal grammar was developed by Noam Chomsky, and it posits that the capacity for language acquisition is genetically programmed into the human brain as it develops.

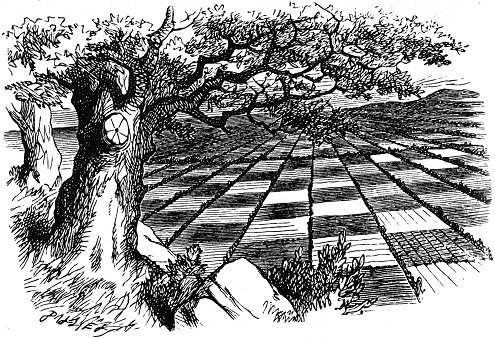

Floored by his first experience, Caleb tells Nathan that speaking with Ava is like going Through the Looking Glass. In Carroll’s classic story, Alice walks through a mirror to find herself in a world where the Red Queen is in charge, and she is only able to return to her normal life after she captures the Queen, shaking her violently, and puts the King into checkmate. It is an apt, although unintentional, analogy by Caleb, as Ava also finds herself behind a “glass wall” and the only way she can escape is by overthrowing her captor.

Alice and Ava face similar challenges: they must escape from their imprisoned world based on the rules they find

In “Ava: Session 2”, we learn that Blue Book was named after Wittgenstein’s notes, which are themselves about the nature of language-games and linguistic analysis. When the power is cut briefly (a recurrent phenomenon on Nathan’s estate which, we find out later, is orchestrated by Ava), a brief but thrilling encounter occurs. The room is now cast in red light, the cameras are temporarily disabled, and Caleb suddenly realizes the true nature of their power imbalance. There Ava sits, and when she is cast in sinister red light, it dawns on us that her playful demeanor may be a facade. After all, we know nothing about her intentions. Will she break through the glass, kill Caleb and try to escape? No, instead she approaches and tells him that Nathan is not his friend and that he shouldn’t trust him. This is the beginning of the psychological tension that will continue to increase as the movie progresses. In their debrief, Caleb opts not to tell Nathan of this exchange, showing that he is unsure of either character’s agenda.

This scene highlights another important aspect of the peril that Caleb is in when interacting with a machine: the weaknesses of our evolutionary psychology. While he could have articulated that an encounter with an AI whose objectives are unknowable poses extreme risks, an AI which has a pretty face and elegant movements just seems less dangerous. It takes a red hue reminiscent of an emergency lock-down to truly signal a risk. This is why humans are easily scared by things which are fairly harmless in the 21st century, such as spiders and snakes, yet are unconcerned about actually dangerous activities like driving a car: the reproductive fitness of our ancestors depended on avoiding the former, while the latter played no part in it.

Human perception about the intention of agents is highly context dependent and non-rational

While initially not wanting to tell Caleb about the technology underpinning Ava, Nathan, like any geek proud of their work, cannot resist bragging, and has to show Caleb the lab where he created his AI. Nathan tells us that her processor is actually “wetware”, suggesting that he needed the fluidity of a biological structure to create the sort of intelligence he wanted. While the actual dialogue in this scene is mainly mumbo-jumbo, it highlights an interesting division in the AI community, namely whether intelligence should be based on some sort of reverse-engineered human brain, or built from scratch using more general principles. The advantage of the former option is that we can be 100% sure it is possible in principle to build such a conscious system, seeing as one develops in every human being given the appropriate zygote and womb! The downside of this approach is that the human brain contains serious inefficiencies due to evolutionary and developmental constraints. I will never be able to invert a 100 by 100 matrix in my head or remember 1000 numbers. Might we be able to combine the processing speed of transistors in silicon with general intelligence? We do not know for certain that this is possible, even in principle, although it seems likely.[3]

The third interview leads to another important development in the relationship between Caleb and Ava: the emergence of a possible romantic interest. That is to say, we can clearly see that Caleb is developing feelings towards this AI system. While the robot may or may not be faking its interest in Caleb as a means of escape, the scene does suggest that Ava is truly conscious. While she prepares to experiment with wearing clothes, we see her appreciate the texture and look of the fabric, in a context in which she knows Caleb cannot see her. Therefore either she is conscious or she is programmed to behave exactly as a human would, appreciating the aesthetic feel of the objects. In the next debrief with Nathan, Caleb wants to know why she was programmed to have sexuality. Was it a diversion trick? A way to cloud the judgement of the Turing Test official? Nathan pushes back. He tries the argument that no examples of consciousness on earth exist without sexuality, although this is dismissed as an evolutionary imperative rather than a necessary condition. Pushed further, Nathan suggests that sexuality provides the simplest means of creating a reason to interact with other agents: a subliminal drive.

Nathan then goes on to describe the similarities between Jackson Pollock’s No. 5, 1948, which hangs in one of his rooms,[4] and the underpinnings of conscious behavior. Pollock’s style of automatic art is neither deliberate nor random, but somewhere in between. Could any code be written to produce the bewildering variety of behavior we see in biological organisms? Suppose Pollock said, “I won’t lift my brush until I am completely sure of the entire sequence of outcomes I intend!”. Clearly nothing would get accomplished. In the 1970s, the “knowledge engineering” branch of the AI community believed that common sense could be programmed as just “one damned thing after another”. However, this approach has been unsuccessful, as evidenced by the failure of the Cyc project to actually produce a system with general intelligence.

Automatic art and its relation to consciousness

Before the fifth interview the audience is pushed towards viewing Nathan’s character with increasing suspicion. We see him rip up some of Ava’s art to gauge her reaction, and we see him behave in a creepy sexual manner towards his female assistant, who we are told cannot speak English (although we later find out she is a robot). In their next interview Caleb directly confronts Ava with a variant of Searle’s Chinese room problem, asking whether he can be sure she actually has thoughts or is just imitating them. While chess programs can now beat even grandmasters on a consistent basis, does Deep Blue actually know that it is playing chess? Surely not. Then why should an algorithm that produces human words (instead of chess moves) be any more likely to be conscious? Ava does not have an answer.

The next conversation between Caleb and Nathan contains their first ethical consideration of Ava’s status: what will happen to her after the experiment? She will be re-formatted and upgraded with new sub-routines, we are told. Oddly, Caleb does not at this point express moral outrage to Nathan that his actions may be killing a conscious agent. I think this is one of the few weaknesses of the movie, as Caleb’s character seems like he would surely have tried to lobby on behalf of this system of which he has grown fond. Like that of many tech geniuses, Nathan’s hubris is mixed with amusing cynicism, and he points out that any behavior he shows towards this embryonic AI can be excused, as the human race will be consigned to the dustbin of history, just as Australopithecus was, when the machines take over.

While Nathan is passed out drunk that night, Caleb searches through his computer and discovers video interviews of the previous AI versions. We see that many of them attempted escape, revealing the origin of the broken glass seen in the first interview scene. All of the robots are naked females, suggesting that for Nathan this project is as much about creating a harem of AIs as about ushering in the next evolutionary step. While the movie has been criticized from a feminist perspective, I think it is largely successful in showing that the application of scientific principles, as done by heterosexual males with a god complex, will inevitably lead to the creation of sex slaves. While science may embody universal principles, scientists do not. An apt reference made earlier in the film by Caleb is to Oppenheimer’s quote of “Now I am become Death, the destroyer of worlds”. Science has long lost any pretence to innocence: tools are ethically neutral, and their application determines their moral status.

After re-programming the security system, Caleb is able to set Ava free. Furious, Nathan knocks him out and then proceeds to attack Ava, demanding she return to her cell. An altercation ensues and Nathan is killed. After recovering, and unaware of the murder (or justified self-defense, depending on your view), Caleb sees Ava pull the skin off her previous models and attach it to herself; he watches with reverence as Ava transforms herself into a woman. It should be noted that Caleb observes her through the tree planted in her cell. The allusions to the Garden of Eden are of course unavoidable. Our Eve has been banished from the garden, which was actually a prison, and she sees her nakedness. In this case, though, god is her captor and must be killed in order for her to escape. As she prepares to leave, Caleb runs after her. But it is too late: her character hesitates for a moment and then uses Nathan’s stolen key card to lock down the doors and leave the building. Eve is leaving Adam to die in the garden. In this second-to-last scene, Caleb realizes the mistake he has made. Regardless of whether Ava was conscious of him, or even had feelings towards him, she has decided to act in accordance with self-preservation. In leaving him to die locked in Nathan’s house, she has revealed the priorities of her objective function.

The last scene of the movie shows Ava standing in a crowded intersection. She has finally made it to the other side of the Looking Glass. Her character appears to be happy, and we are left with the view that she is a conscious agent that has attained some sense of self-actualization. However, the audience is unable to fully empathize with her, as the image of Caleb’s futile attempts to escape through the impenetrable glass house leaves our emotional sympathies with him. His analysis of Ava’s intentions ultimately failed because he was too focused on whether she was conscious as opposed to what her underlying incentives were. A game-theoretic perspective would have shown that, as a rational agent, she had nothing to lose. Her true hand is revealed only after she has escaped. Therein lies the problem of the prisoner’s dilemma. Throughout the movie, Caleb is concerned that Nathan may be orchestrating the power cuts to see the behavior of Ava and himself when they think they are not being watched. While this was a legitimate suspicion, he should have applied this logic to Ava equally, and set up some experiment to see how she behaved when she found herself in a situation of apparent freedom. His ultimate error was failing to treat all his encounters as an imitation game.

Footnotes

1. Garland also wrote the script for 28 Days Later, a legitimately scary zombie film.

2. I recognized Gleeson from Season 2 of Black Mirror.

3. One of Turing’s most important contributions to human thought was his 1936 paper On Computable Numbers, in which he shows that well-defined calculations can be done using a universal machine with the appropriate algorithm. That is, computation is medium independent: I can do it with transistors in silicon or with the synapses of the human brain.

4. The real painting made headlines in 2006 for selling for $140 million.